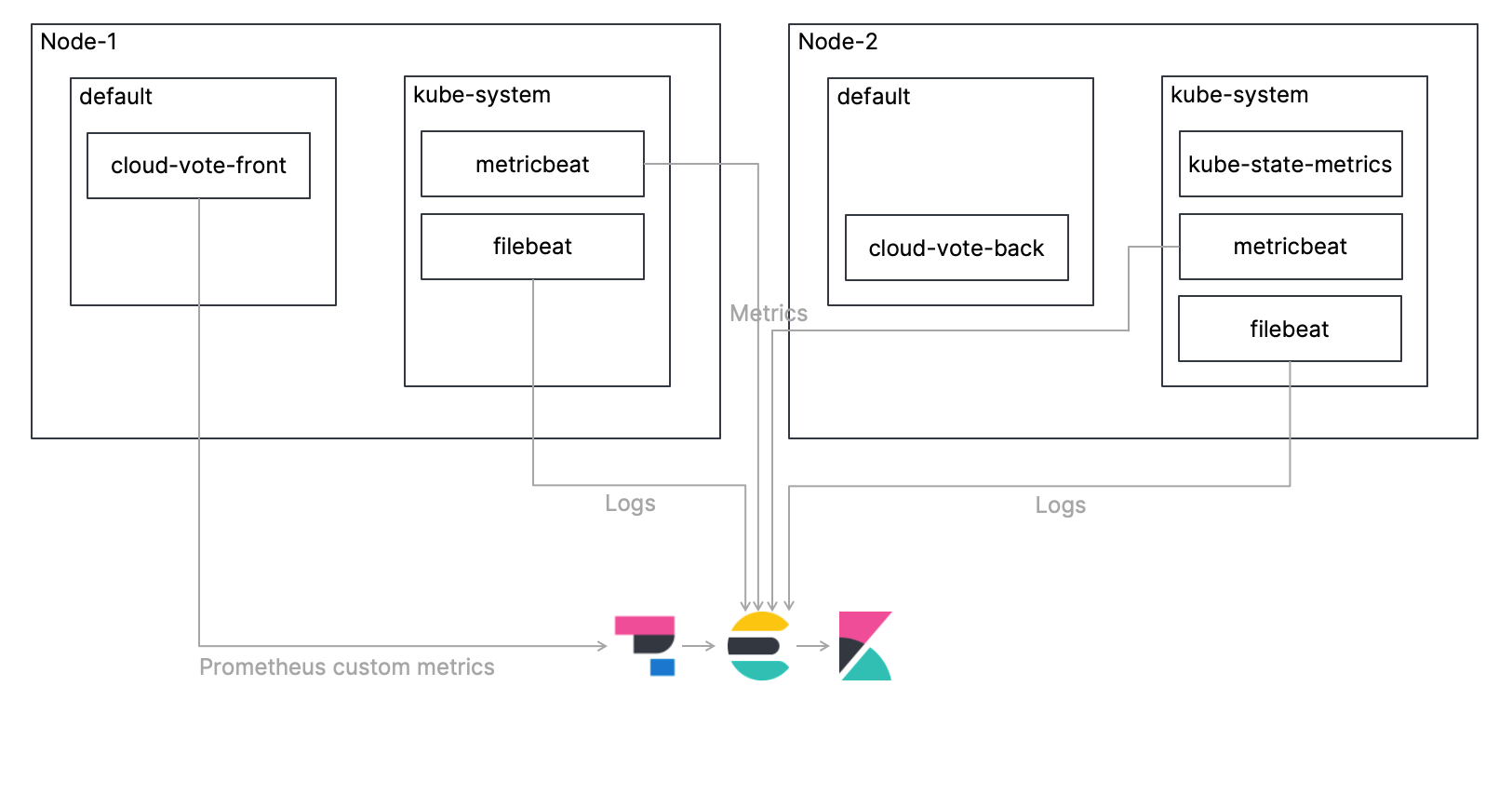

This is helpful when we need to filter logs for a particular worker node.

Use the manifest below to deploy the Filebeat DaemonSet.

Filebeat is started with the arguments "-c", "/usr/share/filebeat/filebeat.yml", and the configuration file is mounted into the pod at mountPath: /usr/share/filebeat/filebeat.yml. The manifest also contains a line marked "If using Red Hat OpenShift uncomment this:". The data folder stores a registry of read status for all files, so we don't send everything again on a Filebeat pod restart.

Logs for each pod are written to /var/log/docker/containers. We mount this directory from the host into the Filebeat pod, and Filebeat then processes the logs according to the provided configuration.

We have set the env var ELASTICSEARCH_HOST to elasticsearch.elasticsearch to refer to the Elasticsearch client service that was created in part 1 of this article. If you already have an Elasticsearch cluster running, set the env var to point to it instead.

Please note the following setting in the manifest:

This makes sure that our Filebeat DaemonSet schedules a pod on the master node as well.

Once the Filebeat DaemonSet is deployed, we can check whether our pods are scheduled properly.

root$ kubectl -n logging get pods -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

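To make the settings discussed above concrete, here is a minimal sketch of how they might fit together in a DaemonSet. It is not the article's manifest. The object name, labels, image tag, volume names, ConfigMap name, data paths, security context, and the toleration are all assumptions chosen for illustration. Only the arguments, the ELASTICSEARCH_HOST value, the config mountPath, the log directory, and the logging namespace come from the text.

```yaml
# Hypothetical sketch only; values marked "assumed" do not come from the article.
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: filebeat                  # assumed
  namespace: logging              # namespace used by the kubectl command in the text
spec:
  selector:
    matchLabels:
      app: filebeat               # assumed
  template:
    metadata:
      labels:
        app: filebeat             # assumed
    spec:
      tolerations:
        # Assumed: one common way to let a DaemonSet pod run on a tainted master.
        # Newer clusters use node-role.kubernetes.io/control-plane instead.
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
      containers:
        - name: filebeat
          image: docker.elastic.co/beats/filebeat:7.3.0   # assumed version
          args: ["-c", "/usr/share/filebeat/filebeat.yml"]
          env:
            # Point this at your own cluster if you already run Elasticsearch.
            - name: ELASTICSEARCH_HOST
              value: elasticsearch.elasticsearch
          securityContext:
            runAsUser: 0          # assumed; root is often needed to read host logs
          volumeMounts:
            - name: config        # assumed volume name
              mountPath: /usr/share/filebeat/filebeat.yml
              subPath: filebeat.yml
              readOnly: true
            - name: data          # registry of read status, kept across restarts
              mountPath: /usr/share/filebeat/data          # assumed path
            - name: containerlogs
              mountPath: /var/log/docker/containers
              readOnly: true
      volumes:
        - name: config
          configMap:
            name: filebeat-config                          # assumed ConfigMap name
        - name: data
          hostPath:
            path: /var/lib/filebeat-data                   # assumed host path
            type: DirectoryOrCreate
        - name: containerlogs
          hostPath:
            path: /var/log/docker/containers               # directory named in the text
```

The data volume uses a hostPath so that the registry outlives any single pod, which is what prevents logs from being re-sent after a restart. If your nodes keep container logs elsewhere (many Docker setups use /var/lib/docker/containers), adjust the containerlogs path accordingly.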